The role of error in organizing behaviour

This paper by Jens Rasmussen is a “classic paper,” as the journal puts it. He goes over several contexts in which the concept of error is important. From these, he shows that “error” is not its own class of behavior, so studying it as such doesn’t make sense. Instead, he advocates examining the cognitive processes that shape behavior.

Even in situations where work is relatively simple and error is well defined, Rasmussen tells us that:

we are dealing with a human link in an extended chain of events; the “error” is a link in the chain, in most cases not the origin of the course of events.

In complex systems, the world is a dynamic flow, which on its own can’t be explained causally. To explain it, we need to look at individual events. The trouble is that those individual events are created from the dynamic flow through decomposition. This decomposition is highly subjective, though, and different people will come up with different events in the chain.

The resulting explanation will take for granted his or her frame of reference and, in general, only what he or she finds to be unusual will be included: the less familiar the context, the more detailed the decomposition.

This raises the question: when do you stop tracing events upstream? Each person has their own answer. Even when individuals agree, different groups, organizations, or professional communities may draw the line differently, and at different times. (We’ve talked about this before.)

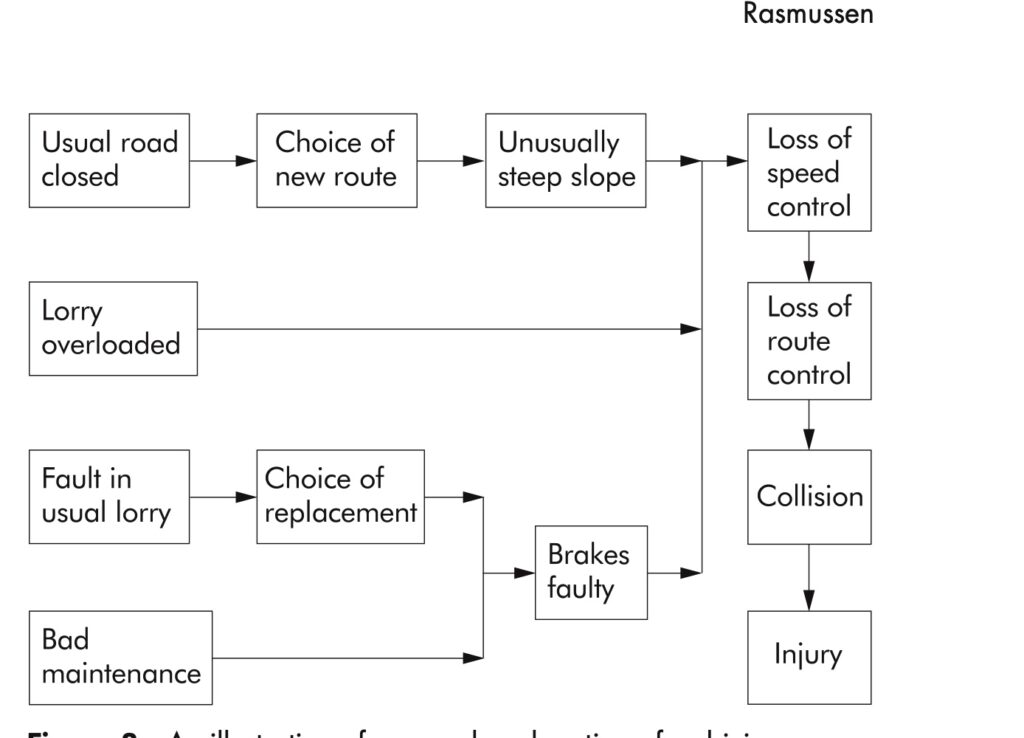

Rasmussen provides us a diagram to help:

“Note that only abnormal or unusual events together with violations of rules are included”

Because humans tend to see what they expect, Rasmussen correctly predicted that the focus would keep moving upstream: technical faults were once “in focus” as causes, then human errors, and eventually designers and managers would be. This shift in focus doesn’t necessarily make anything clearer or answer any more questions. It just changes where one looks, based on what was expected.

Stop rules exist, of course, which is why no one gets stuck forever analyzing events all the way back to the Big Bang. They usually aren’t defined in advance, though, and are mostly implicit. Searches for upstream events tend to terminate in one of three ways:

- When the causal path can no longer be followed because information is missing.

- When a familiar, abnormal event is found that serves as a reasonable explanation.

- When a cure is available.

Notice that none of these conditions necessarily indicates that insight has been gained or that learning has taken place. We’ve talked a bit about the third one before.

“identification of accident causes is controlled by pragmatic, subjective stop rules. These rules depend on the aim of the analysis, i.e. whether the aim is to explain the course of events, to allocate responsibility and blame, or to identify possible system improvements in order to avoid future accidents.”

Seeking explanation

If the goal of an analysis is to explain an accident, the second stop rule is likely to apply: backtracking continues until the analyst finds a cause that is familiar to them.

Rasmussen gives the example of a failed technical component: the failure will be accepted as the “prime cause” if failures of that type of component seem to be “as usual.” The search may continue if the analyst believes the consequences of the fault make the choice of that component unreasonable, or if a reasonable operator, better trained or more aware, could have stopped the process. Depending on which search is performed, one could then find a design, manufacturing, or operator error.

“Human error is, particularly at present, familiar to analysts: to err is human, and highly skilled people will frequently depart from normative procedures”

Allocating responsibility

When the analysis is instead meant to “allocate responsibility” (more likely, to place blame), the tracing of events tends to look for two things at once: a person who made an error and who was, at the same time, “in control” of their behavior. As Rasmussen says: “This is unfortunate because they may have very well been in a situation where they do not have ‘control’”.

How operators respond to unfamiliar events, adequately or not, depends heavily on what they’ve experienced during normal work. That experience, and thus an operator’s behavior, is conditioned by the choices made by those who planned or managed the work. Rasmussen argues that, from this perspective, those managers are more “responsible” than an operator caught in the dynamic flow of events.

Yet the decisions of designers and managers are unlikely to be considered in a causal analysis, because they are “normal events” and aren’t directly in the path of that dynamic flow. Rasmussen gives a warning that I think all of us in software should heed. Many of us have been caught in such traps, or have even accidentally set them for others:

“There is considerable danger that systemic traps can be arranged for people in the dynamic course of events”

Analysis for system improvements

When I work with software teams, especially those with an SRE slant, I find that most believe this is what causal analysis is for: finding some way to make the system better. This sort of analysis has a different focus, so a different stop rule applies. Here, whether a cure is known for a given problem dictates where the search ends. Most often, the cure will be associated with events that are thought to be root causes.

Though typical analysis and explanation focus on unusual events, the causal chain could also be broken by changing normal events. This means that when we decompose the flow, whether for timelines, post-incident reviews, or anything else, we should include normal events too.

Because these different types of analysis use different stop rules, an analysis done with one purpose in mind likely won’t serve another purpose. This is important to keep in mind when doing incident reviews or other retrospectives.
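The idea that the stopping point depends on the purpose of the analysis can be sketched as a toy backtracking search. This is my own illustration and not a model from Rasmussen’s paper. The `Event` fields, the purpose labels (`"explain"`, `"blame"`, `"improve"`), and the incident are all hypothetical, and real analysts don’t apply their stop rules this mechanically. The search walks upstream from the accident and stops at the first event that satisfies the rule for the chosen purpose, or wherever the information runs out.

```python
from dataclasses import dataclass


# Toy model for illustration only; not taken from Rasmussen's paper.
@dataclass
class Event:
    """One event cut out of the dynamic flow by whoever did the decomposition."""
    description: str
    familiar_abnormal: bool = False  # a familiar, abnormal event (second stop rule)
    human_in_control: bool = False   # a person erred and seemed "in control"
    cure_available: bool = False     # a known fix exists (third stop rule)
    trail_ends: bool = False         # information further upstream is missing (first stop rule)


def stop_point(chain: list[Event], purpose: str) -> Event:
    """Walk upstream from chain[0] (the accident) and return where the search stops.

    Which rule ends the search depends on the hypothetical purpose label.
    """
    for event in chain:
        if purpose == "explain" and event.familiar_abnormal:
            return event
        if purpose == "blame" and event.human_in_control:
            return event
        if purpose == "improve" and event.cure_available:
            return event
        if event.trail_ends:  # missing information stops every kind of search
            return event
    return chain[-1]  # no rule fired: stop at the oldest event anyone recorded


# Hypothetical incident, listed from the outage back upstream.
chain = [
    Event("service crashed"),
    Event("disk filled up", familiar_abnormal=True),
    Event("on-call engineer skipped a runbook cleanup step", human_in_control=True),
    Event("log rotation was never configured for this host", cure_available=True),
    Event("host built from an old provisioning template", trail_ends=True),
]

for purpose in ("explain", "blame", "improve"):
    print(purpose, "->", stop_point(chain, purpose).description)
# explain -> disk filled up
# blame -> on-call engineer skipped a runbook cleanup step
# improve -> log rotation was never configured for this host
```

Run against the same chain, the three purposes stop at three different events. That is why an analysis done for one purpose doesn’t automatically answer the questions of another. The event the improvement-focused search lands on is also a fairly ordinary configuration gap. It is the kind of normal event that disappears if a timeline only records what was unusual.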

Adaptation and the organizing of work

Rasmussen also covers how people adapt and organize their work. Learning on the job is likely to be a self-organized, evolutionary process. It is optimized against each individual’s personal criteria, within the larger boundaries set by available resources and other task constraints. Whatever those optimizations turn out to be, they will be heavily influenced by the culture or norms of the organization or team.

Practice, meaning the chance to experiment, is required to find shortcuts or smoother ways of doing things. This means that:

“effective, professional performance depends on empirical correlation of cues to successful acts. Humans typically seek the way of least effort. Therefore, it can be expected that no more information will be used than is necessary for discrimination among the perceived alternative for action in any particular situation”

So a large range of possible actions gets steadily narrowed, through both practice and decision making. This is a good thing in that it allows more skilled performance to develop. But it also opens the door to “error” when the situation has changed, or when some fault makes cues unavailable or otherwise defies expectations.

There are two general types of error in this situation. In the first, someone tests a hypothesis based on the cues and finds that it doesn’t hold up. In the second, the actions chosen based on the same cues cause the perceived alternatives to seem unreliable. Both are effects of adaptation. They can’t really be eliminated in complex systems, where the work is always underspecified and humans are left to fill in the gaps.

Rasmussen gives an example of a local adaptation that conflicts with a delayed, conditional effect: working with instructions that require a specific order of actions, or that rule out certain orders when abnormal conditions are present. If that prescribed sequence goes against the person’s own process criteria, it’s very likely they’ll modify the procedure, and no adverse effect will be immediately visible.

This could be the case if the instructions have someone going back and forth between remote locations because, in rare cases, that sequence is safer. Their “process criterion” will very quickly teach them to batch the actions at each location instead. In most cases this has no effect. Only in the rare case will it matter.

Even within a smaller set of sequences, adaptation occurs as practice improves skill and makes it second nature. Optimizations like smoothness and speed can only be obtained by exploring, and sometimes crossing, whatever boundary or tolerance there is.

“Some errors therefore have a function in maintaining a skill at its proper level, and they cannot be considered a separable category of events in a causal chain because they are integral parts of a feedback loop.”

Takeaways

- Occurrences, including accidents and incidents, happen in a continuous, dynamic flow.

- They are only perceivable as individual events when the flow is decomposed.

- This decomposition, from the dynamic flow into individual events, is where causal analysis can take place, but it is a very subjective decomposition. Different people are likely to create different events from the flow.

- Where people stop in analyzing a causal chain of decomposed events is very subjective. It can vary from individual to individual, team to team, etc…

- It also varies from purpose to purpose, which means one analysis isn’t necessarily useful for another purpose.

- Those doing the work are continually resolving the degrees of freedom they have and then taking action.

- Improvements in skill, including adaptation, require being able to explore boundaries and tolerances and occasionally cross them.

- This “error” in crossing the boundary can’t be separated from its feedback loop in a causal chain.

Subscribe to Resilience Roundup
